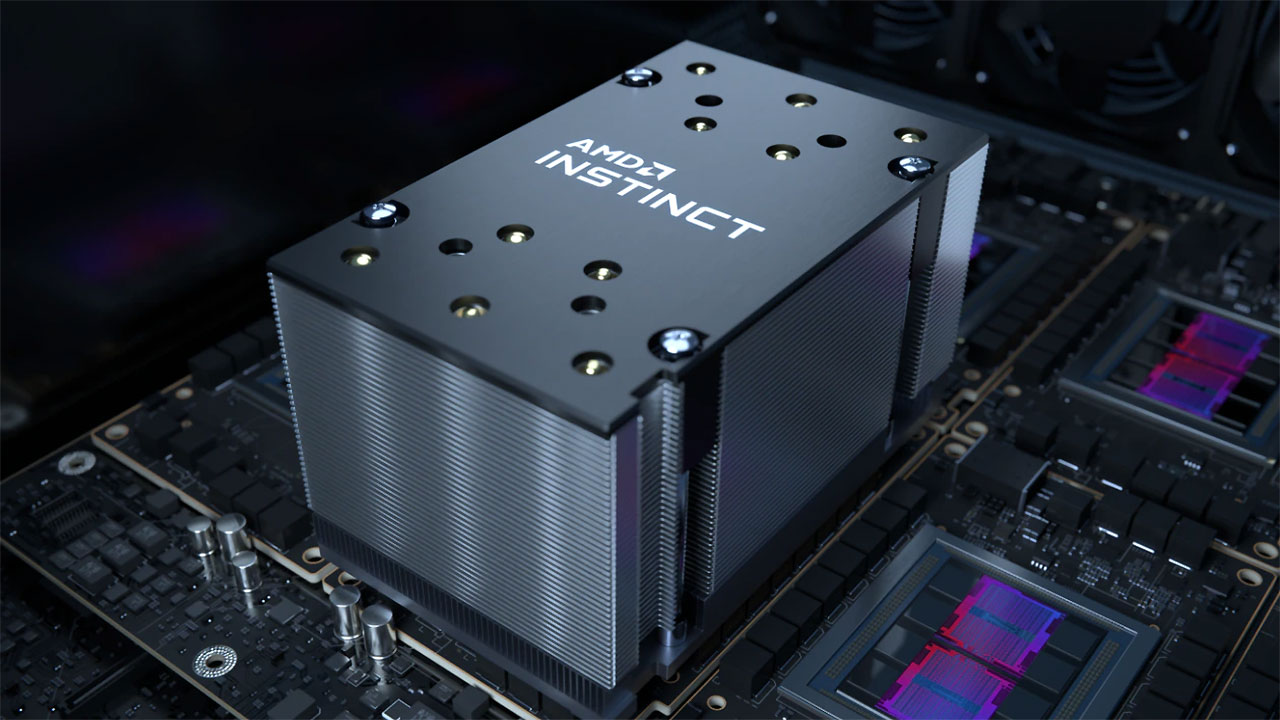

AMD has announced its new Instinct MI200 series accelerators, designed for HPC and AI applications. The new series includes the Instinct MI250X, which is said to deliver up to 4.9X better performance than competitive accelerators for double-precision (FP64) HPC applications and to surpass 380 teraflops of peak theoretical half-precision (FP16) performance for AI workloads.

“AMD Instinct MI200 accelerators deliver leadership HPC and AI performance, helping scientists make generational leaps in research that can dramatically shorten the time between initial hypothesis and discovery,” said Forrest Norrod, senior vice president and general manager, Data Center and Embedded Solutions Business Group, AMD. “With key innovations in architecture, packaging and system design, the AMD Instinct MI200 series accelerators are the most advanced data center GPUs ever, providing exceptional performance for supercomputers and data centers to solve the world’s most complex problems.”

Here are some of the key features and capabilities of AMD’s new Instinct MI200 series accelerators:

- AMD CDNA 2 architecture – 2nd Gen Matrix Cores accelerating FP64 and FP32 matrix operations, delivering up to 4X the peak theoretical FP64 performance vs. AMD previous gen GPUs.

- Leadership Packaging Technology – Industry-first multi-die GPU design with 2.5D Elevated Fanout Bridge (EFB) technology delivers 1.8X more cores and 2.7X higher memory bandwidth vs. AMD previous gen GPUs, offering the industry’s best aggregate peak theoretical memory bandwidth at 3.2 terabytes per second.

- 3rd Gen AMD Infinity Fabric technology – Up to 8 Infinity Fabric links connect the AMD Instinct MI200 with 3rd Gen EPYC CPUs and other GPUs in the node to enable unified CPU/GPU memory coherency and maximize system throughput, allowing for an easier on-ramp for CPU codes to tap the power of accelerators.

Exascale With AMD

AMD, in collaboration with the U.S. Department of Energy, Oak Ridge National Laboratory, and HPE, designed the Frontier supercomputer expected to deliver more than 1.5 exaflops of peak computing power. Powered by optimized 3rd Gen AMD EPYC CPUs and AMD Instinct MI250X accelerators, Frontier will push the boundaries of scientific discovery by dramatically enhancing performance of AI, analytics, and simulation at scale, helping scientists to pack in more calculations, identify new patterns in data, and develop innovative data analysis methods to accelerate the pace of scientific discovery.

“The Frontier supercomputer is the culmination of a strong collaboration between AMD, HPE and the U.S. Department of Energy, to provide an exascale-capable system that pushes the boundaries of scientific discovery by dramatically enhancing performance of artificial intelligence, analytics, and simulation at scale,” said Thomas Zacharia, director, Oak Ridge National Laboratory.

Software for Enabling Exascale Science

AMD ROCm is an open software platform allowing researchers to tap the power of AMD Instinct accelerators to drive scientific discoveries. The ROCm platform is built on the foundation of open portability, supporting environments across multiple accelerator vendors and architectures. With ROCm 5.0, AMD extends its open platform powering top HPC and AI applications with AMD Instinct MI200 series accelerators, increasing accessibility of ROCm for developers and delivering leadership performance across key workloads.

Through the AMD Infinity Hub, researchers, data scientists and end-users can easily find, download and install containerized HPC apps and ML frameworks that are optimized and supported on AMD Instinct accelerators and ROCm. The hub currently offers a range of containers supporting either Radeon Instinct MI50, AMD Instinct MI100 or AMD Instinct MI200 accelerators including several applications like Chroma, CP2k, LAMMPS, NAMD, OpenMM and more, along with popular ML frameworks TensorFlow and PyTorch. New containers are continually being added to the hub.

The AMD Instinct MI250X and AMD Instinct MI250 are available in the OCP Accelerator Module (OAM) form factor, while the AMD Instinct MI210 will be available in a PCIe card form factor in OEM servers.

The AMD Instinct MI250X accelerator is also available from HPE in the HPE Cray EX Supercomputer, and additional AMD Instinct MI200 series accelerators are expected in systems from major OEM and ODM partners in enterprise markets in Q1 2022, including ASUS, ATOS, Dell Technologies, Gigabyte, Hewlett Packard Enterprise (HPE), Lenovo, Penguin Computing and Supermicro.

More information on AMD Instinct MI200 series accelerators can be found here.

| Models | Compute Units | Stream Processors | FP64 / FP32 Vector (Peak) | FP64 / FP32 Matrix (Peak) | FP16 / bf16 (Peak) | INT4 / INT8 (Peak) | HBM2e ECC Memory | Memory Bandwidth | Form Factor |
|---|---|---|---|---|---|---|---|---|---|
| AMD Instinct MI250X | 220 | 14,080 | Up to 47.9 TF | Up to 95.7 TF | Up to 383.0 TF | Up to 383.0 TOPS | 128GB | 3.2 TB/sec | OCP Accelerator Module |
| AMD Instinct MI250 | 208 | 13,312 | Up to 45.3 TF | Up to 90.5 TF | Up to 362.1 TF | Up to 362.1 TOPS | 128GB | 3.2 TB/sec | OCP Accelerator Module |
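For readers who want to sanity-check the peak figures in the table, the arithmetic can be roughly sketched as follows. This is an illustration, not AMD's published method. The compute unit and stream processor counts come from the table; the 1.7 GHz peak engine clock and the two-operations-per-FMA convention are assumptions not stated in this article, and the 2x and 8x multipliers are simply inferred from the ratios between the table's own columns. All function and constant names are hypothetical.

```python
# Values copied from the spec table in this article.
SPEC_TABLE = {
    "AMD Instinct MI250X": {"compute_units": 220, "stream_processors": 14_080},
    "AMD Instinct MI250": {"compute_units": 208, "stream_processors": 13_312},
}

# ASSUMPTIONS (not stated in the article):
ASSUMED_PEAK_CLOCK_GHZ = 1.7  # assumed peak engine clock for both models
FLOPS_PER_FMA = 2             # a fused multiply-add counted as two operations

# Multipliers inferred from the table's column ratios, not from AMD documentation:
# 95.7 / 47.9 is roughly 2, and 383.0 / 47.9 is roughly 8.
INFERRED_MATRIX_FACTOR = 2
INFERRED_FP16_FACTOR = 8


def peak_teraflops(stream_processors, factor=1):
    """Estimate peak throughput in teraflops under the assumptions above."""
    gigaflops = stream_processors * FLOPS_PER_FMA * ASSUMED_PEAK_CLOCK_GHZ * factor
    return gigaflops / 1000.0


def derive(model):
    """Return the derived figures for one model, rounded like the table."""
    spec = SPEC_TABLE[model]
    sp = spec["stream_processors"]
    return {
        "stream_processors_per_cu": sp // spec["compute_units"],
        "vector_tf": round(peak_teraflops(sp), 1),
        "matrix_tf": round(peak_teraflops(sp, INFERRED_MATRIX_FACTOR), 1),
        "fp16_tf": round(peak_teraflops(sp, INFERRED_FP16_FACTOR), 1),
    }


if __name__ == "__main__":
    for model in SPEC_TABLE:
        print(model, derive(model))
```

Under these assumptions the derived values can be compared directly against the vector, matrix, and FP16 columns of the table; any mismatch would point to an assumption that does not hold for a given model.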